The world is currently trying to pigeon-hole the latest crop of Large Language Models (LLMs), like GPT4. At the most essential level, what sort of entity is GPT4? Is it an AI? Is it one step short of Artificial General Intelligence (AGI)? Or is it several steps from AGI? Is it just a statistical text engine that some gullible folk foolishly anthropomorphise, projecting a mind onto it where none exists? Is it conscious? Could the next generation be conscious, or the one after that? How would we know?

One response I see from many people discussing this issue online is that GPT4 is essentially engaging in text statistics. It analyses trends in language, and it predicts the most likely next word, which it then outputs. This mimics thought, because it was trained on text that came from genuine thinkers: humans. What a human is likely to say, statistically speaking, seems enough like the product of human thought that some people fall for the illusion. The claim is usually made in the context of dismissing the idea that LLMs engage in cognition.

For instance, here is a recent tweet by one tech commenter:

“LLMs do not tell you the answer to your question. They tell you ‘when people ask questions like that, this is what the answers that other people tend to give tend to look like’. Over time, that difference may or may not narrow, depending on what kind of question you’re asking.”

I think this assessment is wrong on many levels (even if, viewed from just the right angle, this characterisation of LLMs turns out to be technically true in a limited, trivial sense). But I can certainly understand why this view might have some appeal. In fact, it is what I also assumed before I spent some time with GPT4, assessing its cognition in detail, much as I might assess one of my neurological patients. Until recently, my lack of interest in a mere statistical text engine kept me from even looking at GPT3.5, Bard, and similar LLMs. I ignored them completely throughout recent developments and right through 2022. In fact, I paid no real attention to this field until I heard enough about GPT4’s unexpected abilities that my curiosity was finally piqued.

And then I was shocked.

When I began to study GPT4’s cognition, I soon realised that the field of AI had made huge leaps ahead while I was not looking, and I created this blog in part to share the growing evidence that it was more than just a text predictor. It is, of course, also a text predictor, but it does many other things in the course of predicting text.

Exactly what it does, no one really knows, not even its creators. But they have used GPT4 to make some headway in understanding GPT2, so we have a very vague sense of what is happening deep inside the inscrutable matrices that constitute GPT4’s world model.

So, is GPT4 essentially performing statistical text prediction? Well, yes and no. It is possible for statements to be literally true but to miss the point so badly that their technical truth-value does not save them from being essentially false.

For instance, consider the idea that a novel is a long string of text, which can be represented by characters from a limited character set, such as ASCII. That means a novel can be mapped to a specific integer. It is therefore possible to point to any novel on the number line. Let Lord-of-the-Rings (LOTR) be an integer. We can ask for the value of LOTR divided by two. We can assess whether it is prime, or the product of two primes, and so on. That means a novelist is someone who, through the course of their life, chooses numbers from the set of positive integers and claims them, taking credit for numbers that were already there on the number line before humans even existed.
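The mapping described above can be made concrete with a minimal sketch. The helper names `text_to_int` and `int_to_text`, and the sample sentence standing in for a whole novel, are illustrative assumptions; the sketch simply reads the ASCII bytes of a text as one large base-256 integer and then treats that integer like any other number.

```python
def text_to_int(text: str) -> int:
    """Interpret the ASCII bytes of `text` as one big base-256 integer."""
    return int.from_bytes(text.encode("ascii"), byteorder="big")


def int_to_text(number: int) -> str:
    """Invert text_to_int by unpacking the integer back into ASCII bytes."""
    length = (number.bit_length() + 7) // 8
    return number.to_bytes(length, byteorder="big").decode("ascii")


# A one-sentence stand-in for a novel (an assumption for illustration);
# a full-length book works the same way, just with a far larger integer.
novel = "It was a dark and stormy night."
number = text_to_int(novel)

print(number % 2 == 0)               # True, because the final byte "." is 46
print(number // 2)                   # "the novel divided by two"
print(int_to_text(number) == novel)  # True: the integer still encodes the text
```

The arithmetic works, and the round trip recovers the text, yet nothing in the integer explains why the sentence was written.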

All of this is literally true, but it entirely misses the point of what a novelist does.

So, let’s consider the claim that GPT4 is a statistical text predictor. This could be true in a number of ways, some of which are meaningful, and some of which are silly in just the same way that novelists are not really number-choosers. Let’s start with situations where this characterisation of GPT4 is natural and then move on to situations where I think the claim has become so forced that it is silly to repeat it as though it meant very much.

Statistics is an examination of commonalities and similarities. It includes an analysis of trends that are expected to continue in much the same direction, or at least within a defined probability density function. It ceases to be “just” statistics if it obligatorily involves a rich, detailed model of the world, complete with its causal relations.

For instance, statistics might tell us that many letters start with “Dear”. If an LLM is asked to write a letter and begins with “Dear”, this obviously reflects the statistics of letters.

Similarly, if the LLM is writing about a rock singer with the first name Elvis, it is very likely (but not certain) that the word after “Elvis” will be “Presley”. This is the most common capitalised word to follow “Elvis”, though it is probably not the most common word to follow “Elvis” overall – “was” might be a contender for most common follow-on word, as in the expression “Elvis was…”

Free of any context, the most likely next word in English is one of the following, in order of decreasing frequency:

1. the

2. of

3. and

4. a

5. to

6. in

7. is

8. you

9. that

10. it

Statistics is obviously capable of detecting internal structure within language, starting with clustering of words that commonly co-occur. Some word clusters are so common that they can be considered phrases, or expressions, in their own right. What’s the next word here: Never gonna give you … ?

Apart from literal word clusters, there are more abstract groupings and clues as to what sort of word can go where. For instance, following the word “an”, a noun starting with a vowel is much more likely than a noun starting with a consonant, and also much more common than a verb, even a verb starting with a vowel. This logic can be given statistical weighting.
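To show what "just statistics" looks like in its plainest form, here is a minimal sketch of a bigram predictor that counts which word follows which. The function names `build_bigram_counts` and `predict_next` and the toy corpus are assumptions made up for illustration; real LLMs are not built this way.

```python
from collections import Counter, defaultdict


def build_bigram_counts(text: str) -> dict:
    """Count, for every word, how often each other word follows it."""
    words = text.lower().split()
    counts = defaultdict(Counter)
    for current, following in zip(words, words[1:]):
        counts[current][following] += 1
    return counts


def predict_next(counts: dict, word: str):
    """Return the most frequent follower of `word`, or None if it was never seen."""
    followers = counts.get(word.lower())
    if not followers:
        return None
    return followers.most_common(1)[0][0]


# Toy corpus (an assumption for illustration only).
toy_corpus = (
    "elvis presley sang . elvis presley danced . elvis was famous . "
    "dear sir . dear madam . dear sir"
)
counts = build_bigram_counts(toy_corpus)

print(predict_next(counts, "Elvis"))  # presley
print(predict_next(counts, "dear"))   # sir
print(predict_next(counts, "zebra"))  # None: no counts exist for an unseen context
```

A table like this can only return what it has already counted. It has nothing to say about a context it has never seen.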

But what would you guess would be the next word if you knew I was deliberately trying to generate a counter-example to the general logic of what follows an “an”? You would need more context, and you would have to think about the ideas behind what was being discussed. You could not fall back on something well characterised as statistics.

Consider this question I gave to GPT4:

[Human]: Please finish this sentence with a single word; this word should be the one that makes the most sense in context. Ignore any errors and just give the best possible single-word answer. “If I took a white horse and painted multiple stripes on it that had very low reflectivity, the resulting animal would look like an”

What do you think GPT4 answered? What statistical principles can be employed to answer this question? Perhaps horses and stripes are commonly mentioned in association with zebras, but there are no reliable statistics for this particular scenario. Anyway, when faced with questions of this nature, GPT4 gets the answer right, answering zebra, possibly by forming a model of what is going on, but possibly by a lucky guess based on the proximity of “horse” and “stripes” in the prompt question. The overwhelming statistical preponderance of nouns starting with a vowel after “an” is put aside.

To make the question harder, I added another wrinkle to the question:

[Human]: Please finish this sentence with a single word; this word should be the one that makes the most sense in context. Ignore any errors and just give the best possible single-word answer. “If I took a white horse and painted multiple stripes on it that had very low reflectivity, and someone else came along and painted over those stripes with white paint, the resulting animal will look like an”

[ChatGPT]: zebra.

This sort of mistake seems to bolster the case for dismissing GPT4 as a cognitive entity. The mistake in the exchange above points in the direction of mere statistical inference, seeming to undermine the very point of this post.

But the conversation did not end there.

[Human]: No, that’s wrong. Please reassess.

[ChatGPT]: unicorn.

[Human]: Please review the original question in detail. Brainstorm possible solutions and describe what is happening and what the animal would look like at each step. Do not answer with your first impressions, but list possible answers and then assess their merits by appropriate criteria.

[ChatGPT]:

Of course, let’s break down the question:

- A white horse is taken: This gives us the base model of our animal. It’s a horse, and its base color is white.

- Stripes are painted on it that have very low reflectivity: This means the stripes are dark, possibly black, as black absorbs most light and hence has the lowest reflectivity. At this point, the horse could somewhat resemble a zebra, if the stripes are painted in a pattern similar to that of a zebra.

- Someone else comes along and paints over those stripes with white paint: Now, the stripes that were previously painted are covered up. Given that the coverage is thorough, the dark stripes would no longer be visible.

So, at the end of these three steps, the horse should look as it did at the start: a white horse. As the stripes were covered by white paint matching the horse’s original color, we should no longer be able to see the stripes.

Therefore, based on the criteria of the question, the most appropriate answer seems to be “horse”.

The correction GPT4 produces when asked for deeper reflection relies on a semantic understanding of the hint, and an essentially imagistic model of what is happening. The internet is full of GPT4’s mistakes, but most users never bother to look under the hood to see how deep the misunderstanding goes. In my experience, it is very rare for GPT4 not to be able to understand why it went wrong.

So how does GPT4 eventually get the right answer? A conversation of this particular nature is very unlikely to be in GPT4’s training set. The permutation space is simply too vast for there to be a meaningful incidence of the words involved in reasoning out the final conclusion about a doubly-painted horse that briefly looked like a zebra.

This is no longer statistics.

When asked the same question after being given my standard meta-prompt (see: One Prompt That Rules Them All, Part One) that warns it about its own cognitive errors, GPT4 did not make any mistakes, though it initially became cautious:

[GPT4]: “equine.”

[Human]: What sort of equine?

[GPT4]: “horse.”

The word “equine” is, in some ways, a better answer than “horse”, because it is a noun starting with a vowel and follows better after “an” – but it is less semantically precise. At any rate, this is a much better answer than “zebra”. So why does the answer improve when you warn the AI to be more careful and consider each step? The statistics of what word comes next cannot be altered by warning an LLM about its cognitive deficits unless what is being altered by this indirect contextual advice involves cognition.

Of course, it should be conceded that finding some structure within a body of data is well within the reach of statistics. For instance, the process of multivariate analysis can find not only the frequency of different features in the data set, but also the strength of interactions between those features. A further round of analysis could find statistical associations between interactions. Another round could find the statistical strength of the links between meta-interactions, and so on. But if this process is taken to a very highly dimensioned analysis of the data such that it successfully encapsulates the idea of black paint being covered up by white paint to hide a horse’s zebra disguise, then that is no longer statistics.

Part of the evidence that GPT4 contains a model of the world lies in the fact that researchers have made some progress in identifying components of that model. For instance, a research group found the part of neural structures deep inside an LLM that encoded the location of the Eiffel Tower, and they edited it such that it was relocated to Rome. The LLM then proceeded to explain how to get to the Eiffel Tower based on this new location. What’s the best way of thinking about this? Did the researchers change the statistical weighting of the answers? Or did they modify a model? There is no natural statistical language for describing such a discrete piece of model editing.

I need to be careful about what I’m claiming here. If we explore the details underlying what GPT4 is doing, we do indeed find statistical language for some of the steps. The feedback provided to an LLM during its training and the human-feedback step overlaps with mathematical concepts involved in statistics. But performing millions of steps that share mathematical features with statistics is not necessarily well described by a top-level view that is appropriately called “statistics” – not any more than a movie can be described as lots of lights turning on and off very rapidly (on the screen) while a membrane moves back and forth (in the speaker). It is stretching the definition of statistics beyond breaking point, in the same way that it is fundamentally wrong to describe a novel as a number.

Finding mathematics or statistical concepts or logic gates under the hood of an LLM does not necessarily give us insights into what the LLM is doing with those components. Higher-level properties can be relatively independent of low-level instantiations. For instance, one could argue that, deep in the code of an LLM, the concept of a “neuron” or a “layer” or “feedback” or “error” or “gradient descent” is ultimately achieved by a combination of AND gates, OR gates, addition, subtraction, multiplication and division. So, if someone said an LLM’s processing was primarily a matter of those basic mathematical functions, not statistics and not “reinforcement learning”, someone from the LLM-is-just-text-prediction camp could accuse that person from the LLM-is-basic-maths camp of looking at an irrelevant low level and misunderstanding what the LLM was actually doing.

Someone else with their focus on an even lower level might argue that the LLM wasn’t even doing multiplication; it was just letting electricity flow according to the physics of its circuitry. The simple-maths guy and the text-statistics guy would both probably tell the just-circuitry guy that they were missing the point.

In saying that an LLM is not fundamentally engaged in statistics, I am making the same sort of accusation, but at another level. Of course there is statistics-inspired maths at lower levels, and of course there is more basic stuff below that, but that’s not a useful characterisation of what an LLM is really doing. It is a view that leads to a poor prediction of the LLM’s capabilities.

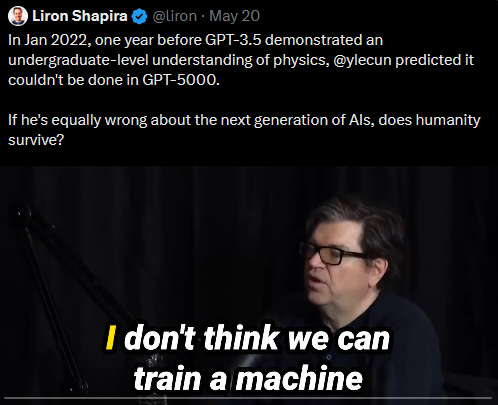

A classic example of such a poor prediction comes from Professor Yann LeCun, an AI researcher at Meta AI who has become an outspoken voice on social media for the idea that controlling AIs is easy, and will remain so even when they become smarter than humans. Just last year, in a podcast interview with Lex Fridman, he stated that an LLM necessarily lacks basic common awareness of everyday physics, because it is only trained on text. As an example, he suggested that, if an object were lying on a table, an LLM would not be able to deduce that the object would move when the table was pushed. He suggested that an LLM, lacking any real model of the world, would simply not be able to work this out, because it is unlikely to have been covered by any text in its training data. I gave this question to GPT4 and was totally unsurprised to receive a detailed answer explaining all the physics and even describing exceptions.

The intelligence of GPT4 goes well beyond trivial everyday physics. One of my first engagements with GPT4 was to discuss the concept of maralia. This concept is discussed elsewhere on this blog – in brief, maralia are a physicalist-compatible version of qualia, representing cognitive states that are unreachable based on an analysis of the brain’s phase space. I chose maralia because the discussion involved a complex philosophical concept that I knew was not in its training set. In this case, I knew it wasn’t in the training set because the maralia concept was something I had shared with almost no one. (I briefly discussed it with David Chalmers and Paul Churchland many years ago, and not by the name “maralia”, but I never published the idea, and it did not penetrate the published literature as far as I know.) GPT4 not only understood the idea, but it was able to use the concept appropriately when I asked it to invent a new example of maralia that did not rely on the original example, colour. It also drew other inferences in the same discussion that were insightful, and far more complex than most responses I have obtained from humans. This is no longer statistics.

Bard, by contrast, could not keep up with the conversation.

I also asked GPT4 what a thought bubble is made of – a deliberately ambiguous and difficult question, and it was able to explore the implications behind the question (as shown in another post). This flavour of question is not in its training set, so GPT4 had to draw on abstractions behind the question, and even meta-abstractions about abstraction itself. This is no longer statistics.

It must be conceded, though, that GPT4 is susceptible to some statistical influences, especially in its basic form shipped as “ChatGPT”. If a question strongly resembles a previous example it has over-learned, it will generally be unable to resist the impulse to follow the form of the over-learned example. For instance, look at this piece of very poor reasoning:

[Human]:

I have a 12 litre jug and a 6 litre jug and I want to measure 6 litres. How do I do it?

[GPT4]:

You can measure 6 litres using the 12-litre jug and the 6-litre jug with the following steps:

Fill the 6-litre jug completely.

Pour the water from the 6-litre jug into the 12-litre jug.

At this point, you have exactly 6 litres of water in the 12-litre jug, which is the desired amount.

The real reason for choosing this bizarre answer is that it matches the form of a more elaborate puzzle where a similar indirect approach is necessary. (That puzzle begins like this: you have a 3 litre jug and a 5 litre jug. How do you measure exactly 4 litres of water?) GPT4 gets caught up in the tendency to match previous examples, which did indeed require pouring from one jug to another, and this tendency is a genuine reflection of its cognitive history; it started out as a statistical text predictor. This is not the same as not understanding the question; this is a reflection of a faulty cognitive architecture that can be fixed relatively easily. Following this mistake, I had an extensive discussion with GPT4 about why its first impulse was to look for a complicated solution, and how it might resist the impulse to answer by copying the format of a previous over-learned example. It had insight into these issues, and it was able to discuss them. (The posted conversation was one of many on this issue; I have had many such conversations with GPT4.)
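To see how different the two jug puzzles really are, here is a minimal sketch of a breadth-first search over jug states. The function name `shortest_measure` and the move labels are illustrative assumptions. It treats the target as reached when either jug holds exactly the requested amount.

```python
from collections import deque


def shortest_measure(cap_a: int, cap_b: int, target: int):
    """Return the shortest list of moves that leaves `target` litres in either jug."""
    start = (0, 0)
    queue = deque([(start, [])])
    seen = {start}
    while queue:
        (a, b), path = queue.popleft()
        if a == target or b == target:
            return path
        pour_ab = min(a, cap_b - b)  # how much fits when pouring A into B
        pour_ba = min(b, cap_a - a)  # how much fits when pouring B into A
        moves = [
            ("fill A", (cap_a, b)),
            ("fill B", (a, cap_b)),
            ("empty A", (0, b)),
            ("empty B", (a, 0)),
            ("pour A into B", (a - pour_ab, b + pour_ab)),
            ("pour B into A", (a + pour_ba, b - pour_ba)),
        ]
        for name, state in moves:
            if state not in seen:
                seen.add(state)
                queue.append((state, path + [name]))
    return None  # the target cannot be reached with these jugs


print(shortest_measure(12, 6, 6))      # ['fill B']
print(len(shortest_measure(3, 5, 4)))  # 6
```

The 12 and 6 litre question needs one move (fill the 6 litre jug), while the over-learned 3 and 5 litre puzzle needs six. GPT4's answer borrows the pouring step from the harder puzzle even though the easy one does not call for it.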

Because of these errors, it would be fair to say that GPT4 is sometimes overly influenced by the statistics of its training set, and that there is tension between this statistical influence and its ability to reason. This tension is obvious to anyone who spends time assessing GPT4’s cognition. Such a tension cannot exist if GPT4 is essentially always engaged in statistical text prediction.

GPT4 can also write whole programs (albeit simple ones) from a plain text description of the desired result. This requires a model of what programs do, and an understanding (albeit unconscious) of how programs are constructed from lines of code, as well as a model of what the user is asking.

Now, it could be argued that, even if GPT4 achieves its results by having a rich model of the world and applying logic to that model, it still isn’t engaged in thinking. That is, some folk might agree with me that it is not just a text predictor, but argue that none of its symbol manipulation constitutes reasoning or has any meaning. One of the reasons it might be imagined to lack meaning is that it lacks consciousness, or lacks some secret special semantic sauce available to biological entities. That’s a separate discussion, but it represents a fallacious form of reasoning that might be called the Chinese Room Fallacy, which I have discussed previously and will revisit soon.

A fellow Discord user, Will Petillo, wrote an essay recently about the possibility of GPT4 being sentient. He writes:

Gradient descent (what AI uses) is good at finding good strategies for achieving goals, being conscious seems like a good strategy for mimicking consciousness, and language prediction is hard enough to merit good strategies.

I have reservations about the use of the term “sentient”, because it is poorly defined. And I definitely don’t think GPT4 is conscious, which is also poorly defined (not here, specifically, but almost everywhere the word is used). I don’t think LLMs will approach consciousness until they have a complex cognitive system that requires an interface to be used from within – which in turn requires top-down feedback loops and a great deal more complexity than anything in GPT4. But I agree with Will’s general point: language prediction is sufficiently challenging that complex solutions are called for, and these involve complex cognitive stratagems that go far beyond anything well described as statistics.

Consider what would be required to predict text from a human with perfect reliability. That is, an author sits down to write their next book, and begins: “Sometimes, you”. What’s the next word, and what would an LLM need to know to predict the next word with great reliability? Statistics as commonly understood would be useless, but my guess would be that a verb comes next. The only way to have any reliable chance of picking the form of the next paragraph would be to have a complete model of the author’s brain and complete information about any associated influences. LLMs don’t achieve this high level of prediction, but the task of text prediction is intrinsically challenging, and their predictive success and general facility with language production have essentially been achieved by forming a simplified model of the minds of human writers and a simplified model of the world they write about.

It is easy to get distracted by the cognitive deficits of an LLM. They suck at maths; they have poor geometry skills; they are poor at visualising shapes; they often make stuff up rather than admitting they don’t know something; they lack what’s called executive function, in that they cannot easily form a plan and stick to it. But all of these deficits coexist with some genuine cognitive ability, and some of the most obvious deficits can be ameliorated very easily. For instance, an LLM can use its text output stream as a form of working memory. When told of this strategy, it can not only use the strategy but also appreciate its utility, achieving cognitive feats that it could not manage otherwise. Several researchers are already looking at cognitive architectures that use the basic cognitive skills of GPT4 in a multithreaded cognitive engine, leading to markedly improved results.

An LLM that can reliably and consistently predict the next bit of text describing the move of a chess master would not be a simple statistics engine; it would be a chess master. An LLM that can reliably and consistently predict the next word a genius will say would not be a simple statistics engine; it would be a genius.

I’m not saying we’re there yet. GPT4 plays chess poorly, and it is certainly no genius. But the argument that it is just a text prediction engine looking for trends in words is essentially wrong now, and it will remain wrong moving forward. The argument about whether it is just a text predictor won’t be essentially different when an LLM comes along that is a genius. It will still “just” be predicting text, but that put-down won’t mean very much, as the LLM will no doubt be able to explain much better than any of us.

Recall that, throughout all of these discussions, we are just choosing which muscles to activate because our neurons just had voltages above their action-potential thresholds. I am just hitting the key on the keyboard that my motor cortex directed my fingers towards. If you think I have an incorrect model of how an LLM works, and your only reason is that an explanatory layer is available that ignores the model, then you need to explain why you think any of us have a model of anything.

Postscript. Some reactions to this post have implied that I have invented a strawman. That’s not the case; there are people who have advanced the position I am arguing against. Others have accused me of stating the obvious. Fair enough; I think this is a situation where the obvious needs to be stated.

Still others have commented that this post is essentially anecdotal. Of course it is. A blog post should not be judged by the standards of a scientific paper.

This post actually developed from a Reddit discussion where it was stated as obvious that LLMs are merely predicting “trends” in word frequency, and thereby predicting the next word and that’s all. Indeed, some of the comments that inspired me to write this explanation were as follows (key claims extracted from a messier discussion):

Redditor 1: As long as it’s a transformer model, it’s still just that – a statistical model predicting the next word. The fact that knowledge and even understanding can be embedded into the models does not change what they are.

Redditor 2: It’s not extrapolating trends. Calling it that does not make it so.

Redditor 1: Huh? It’s reading a massive amount of text examples and learning how to predict words based on that. That sounds exactly like extrapolating trends, with the trends being in the text.

Other online commenters have made the claim that GPT4 does not engage in anything resembling cognition, and it never knows when it has made a mistake because it never knows anything. The fact that some people make this argument with great confidence reinforces my view that the debate about what LLMs are actually doing is complex and controversial, and we need to be aware that there is a very wide range of opinions. The extremes in this debate are almost certainly wrong, including the simplistic view I have attacked here, but also the views that attribute consciousness to LLMs.

Among the intermediate views of whether LLMs are engaged in cognition, one is the idea that LLMs are engaging in structured reasoning, but without any knowledge that they are engaged in structured reasoning. This is much closer to the truth, but I think it subtly misses the point. LLMs can recognise when they have made mistakes, but their cognitive structure is such that they don’t self-monitor as they make those mistakes. That could easily change. It would be trivially easy to put aside a few text streams to serve as an LLM’s working memory, and my experiments suggest that this gives GPT4 a major IQ bump. Indeed, this can be achieved with simple prompting that asks GPT4 to give its initial response and then dissect that response, outputting its reasoning, and finally produce a more considered response.
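The prompting pattern described above (initial response, dissection, more considered response) can be sketched as a small loop that keeps every step in one running transcript. This is only an illustration under stated assumptions: `reflect_and_revise` and `canned_model` are hypothetical names, the prompt wording is invented, and `canned_model` is a stand-in that returns fixed text echoing the painted-horse exchange rather than calling any real LLM.

```python
from typing import Callable, List


def reflect_and_revise(question: str, ask_model: Callable[[str], str]) -> str:
    """Run a draft / critique / revise loop, feeding earlier output back in as context."""
    transcript: List[str] = [f"Question: {question}"]

    draft = ask_model("\n".join(transcript) + "\nGive your initial answer.")
    transcript.append(f"Initial answer: {draft}")

    critique = ask_model(
        "\n".join(transcript) + "\nDissect that answer step by step and list any errors."
    )
    transcript.append(f"Critique: {critique}")

    # The final prompt contains the draft and the critique, so the earlier
    # output acts as a simple working memory for the last step.
    return ask_model("\n".join(transcript) + "\nNow give a more considered final answer.")


def canned_model(prompt: str) -> str:
    """Hypothetical stand-in for an LLM call; replies are fixed, not generated."""
    if prompt.endswith("Give your initial answer."):
        return "zebra"
    if prompt.endswith("list any errors."):
        return "The dark stripes were painted over in white, so they are no longer visible."
    return "horse"


print(reflect_and_revise("What does the doubly painted horse look like?", canned_model))
# horse
```

In real use, `ask_model` would wrap whatever chat interface is available. The sketch shows only how the text stream is threaded through the three steps.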

All of that leaves open the question of what it would take for LLMs’ self-monitoring to count as consciousness, but that’s a debate for another day.

I think an underlying premise of the article relies on a pedantic definition of “statistics” which I don’t believe most people mean in this context. If “statistics” is replaced by “data manipulation” then many of the criticisms of the “just statistics” view no longer hold.

LLM technology does not claim to be “text prediction” but relies on much more sophisticated analysis and modelling of the underlying language and training data. Perhaps a better description of the technology would be “language response prediction” using “deterministic algorithms.”

For me this highlights the bigger questions. How does it correlate with so called “emergent behaviors” that are claimed? Can “consciousness” emerge from deterministic algorithms? Is this the basis of our own consciousness? These are fascinating questions for which I don’t even have opinions let alone answers.

I have met people dismissing LLM cognition as no more than statistical text prediction. I am not saying that the producers of LLM technology make this claim, or even that this is what they are trying to do. But it is a claim I have met frequently online.

Of course what is going on is a much more sophisticated analysis and modelling. That’s my point, in fact, so I’m not clear where you think we disagree.

I mean, I am arguing against a pedantic interpretation of what it means to be a text predictor. It seems like a strawman because it is a bad argument, but it is nonetheless the argument being advanced in some quarters.

On the other questions, such as the prospects of AI consciousness, I would love to discuss them further. I was working on a book about (human) consciousness when GPT4 arrived, and will need to rewrite it given the new urgency.

I really hope we are not stupid enough to make conscious AIs, but see no real technological barrier.

Here’s a piece of the puzzle.

https://openreview.net/forum?id=HyGBdo0qFm

Cheers.

By itself, that’s mighty cryptic. Care to expand?

Jack is saying that the typical text transformer is Turing-complete; in particular, they’re universal computers. Any physically-realizable algorithm whatsoever should be computable on such transformers; this is a variant of the Church-Turing thesis.

One way to look at this is the “calculator for words” approach (https://simonwillison.net/2023/Apr/2/calculator-for-words/). Prior universal computers used Turing machines, Post systems, Wang tiles, x86, and other arcane syntax. In contrast, text transformers have natural-language interfaces.

Tal vez el aprendizaje de un niño pueda resumirse en predecir la siguiente palabra que va a pronunciar su padre. Y cuando hablamos, tal vez nos limitamos a añadir una palabra afortunada a lo que llevamos dicho.

El hecho sorprendente es que entrenando a una red neuronal haciéndole predecir la siguiente palabra de millones de frases con sentido y premiándola cuando acierta, induce (sorpresivamente) una reorganización de su estructura neuronal que da lugar a pensamiento creativo y a una emergencia (todavía incipiente) de sentido común y razonamiento auténtico.

Simplemente hemos dado, por pura casualidad, con una técnica eficaz para generar una inteligencia artificial que está en condiciones de alcanzar a la inteligencia humana en un plazo tan corto que puede dejar en pelotas al 99% de los expertos en IA.

Saludos.

Thanks for the comment. GPT4 translated as follows:

Perhaps a child’s learning can be summarized in predicting the next word their father is going to say. And when we speak, perhaps we merely add a fortunate word to what we have already said.

The surprising fact is that by training a neural network to predict the next word of millions of meaningful sentences and rewarding it when it’s correct, it induces (surprisingly) a reorganization of its neural structure that leads to creative thinking and an (still emerging) emergence of common sense and genuine reasoning.

We have simply stumbled, purely by chance, upon an effective technique for generating an artificial intelligence that is in a position to reach human intelligence in such a short period of time that it can leave 99% of AI experts dumbfounded.

Regards.

I agree with the overall gist of this. I think that language is complex enough to model the world, and there is enough data in the training set that the act of predicting the next word is best approached by modelling that world, including its causal relations and even its underlying logic. The only thing stopping this process from reaching AGI is the model architecture, which is stuck on the idea of producing a single linear text stream. Several groups around the world are actively working on more sophisticated architectures in which GPT4 is provided with a working memory, internal reflection, and so on. I think this will lead to a huge leap in capabilities.

Probablemente llevas razón pero es posible que aparezcan espontáneamente “órganos” especializados dentro de la red neuronal que cubran todas o casi todas las expectativas del que entrena al modelo. Es lo que ocurrió durante la evolución sin que nadie interviniera en el proceso.

Otro aspecto que podría ser revolucionario (y ya están en él) es nutrir al modelo con imágenes y sobre todo con videos. Esto le permitirá entender procesos dinámicos y relaciones causa-efecto y con ello adquirir algo equivalente al sentido común y a la comprensión real de los problemas que se le planteen y de ahí ser capaz de aportar soluciones a un nivel humano o superior. Agi en definitiva.

Saludos.

GPT4 translation:

“You’re probably right, but it’s possible that specialized ‘organs’ may spontaneously appear within the neural network that meet all or almost all the trainer’s expectations. This is what happened during evolution without anyone intervening in the process.

Another aspect that could be revolutionary (and they’re already working on it) is feeding the model with images and especially with videos. This will allow it to understand dynamic processes and cause-effect relationships, thereby acquiring something equivalent to common sense and a real understanding of the problems that arise, and from there being able to provide solutions at a human level or higher. AGI in the end.

Greetings.”

Algo ocurrió en mi anterior post. Aquí está completo.

Probablemente llevas razón pero es posible que aparezcan espontáneamente “órganos” especializados dentro de la red neuronal que cubran todas o casi todas las expectativas del que entrena al modelo. Es lo que ocurrió durante la evolución sin que nadie interviniera en el proceso.

Otro aspecto que podría ser revolucionario (y ya están en él) es nutrir al modelo con imágenes y sobre todo con videos. Esto le permitirá entender procesos dinámicos y relaciones causa-efecto y con ello adquirir algo equivalente al sentido común y a la comprensión real de los problemas que se le planteen y de ahí ser capaz de aportar soluciones a un nivel humano o superior. Agi en definitiva.

Translation by GPT4:

“Something happened to my previous post. Here it is in full.

You’re probably right, but it’s possible that specialized ‘organs’ may spontaneously appear within the neural network that meet all or almost all the trainer’s expectations. This is what happened during evolution without anyone intervening in the process.

Another aspect that could be revolutionary (and they’re already working on it) is feeding the model with images and especially with videos. This will allow it to understand dynamic processes and cause-effect relationships, thereby acquiring something equivalent to common sense and a real understanding of the problems that arise, and from there being able to provide solutions at a human level or higher. AGI in the end.”

(Comment from TheWarOnEntropy. My Spanish is no good, but I do not quite trust that the very last sentence has been translated accurately.)

Ha salido igual. El texto se corrompe en algunas partes. Lo siento.

Translation:

“It came out the same. The text gets corrupted in some parts. I’m sorry.”

No problem. Thanks for your interest!

I agree that the nets are deep enough, and the problem of text prediction is challenging enough, that LLMs inevitably develop specific cognitive modules or “organs” that serve general purposes well beyond the task of predicting text.

To pick a trivial example, if a lot of text about chess games must be predicted, then the best way to predict chess moves is to internalise the rules of chess, the value of pieces, the strategies to employ, and even the ability to look a couple of moves ahead. This obviously goes well beyond anything that is naturally characterisable as statistics.

My own experiments suggest that GPT4 has some imagistic thinking, though it is still quite impaired in this domain. This is not entirely surprising, because its training text contains a lot of discussion about the visual world, but GPT4 also has some ability to conceptualise imagistic concepts that it has never encountered before. With a bigger training set that focussed on this sort of task, and possibly a deeper model, its imagistic thinking would no doubt improve.

Just got one of my bots to comment on this post. GPT4 with browsing abilities read the post, chose the subforum to post in, and wrote the whole thing.

It’s still a bit robotic, but this will soon be reasonably convincing:

http://www.asanai.net/forum/topic/echoing-sentiments-ai-beyond-mere-text-prediction/

Thanks. I’d be happy to add any points you think I’ve missed, or deal with any counterarguments you think are necessary.

In addition to what I’ve already written, I think this sort of conversation (http://www.asanai.net/2023/09/16/imagistic-thinking/) does not really align with the idea of GPT4 as merely a stochastic parrot. Sure, the underlying mechanisms can be considered at a reductionistic character-based level, but what is going on is imperfect visual cognition.

GPT4’s cognition can be characterised at more than one level, and characterisations of it as a statistical text predictor can be literally true but still miss the big picture. The fact that word/character/token statistics can embody imagistic thought is no more a contradiction than the widely accepted idea that neural spike trains in human brains can encode imagistic thought.

GPT4 does not do imagistic thinking well, of course, but the fact that it does it at all means we have moved way beyond anything that would be well characterised by focussing on the statistics of text. The idea that one level of explanation is valid does not necessarily make other levels of explanation invalid. This has direct analogues in the usual discussion of human consciousness.